p2pdma in Linux kernel 4.20-rc1 is here!

- Written by: Stephen Bates

On Sunday, November 4th, 2018, Linus Torvalds released the first release candidate of the 4.20 Linux kernel, and it includes the upstream version of the Eideticom Peer-to-Peer DMA (p2pdma) framework! This framework is an important step in the evolution of PCIe and NVM Express Computational Storage because it allows NVMe and other PCIe devices to move data directly between themselves instead of staging every transfer in CPU memory.

What is a P2P DMA?

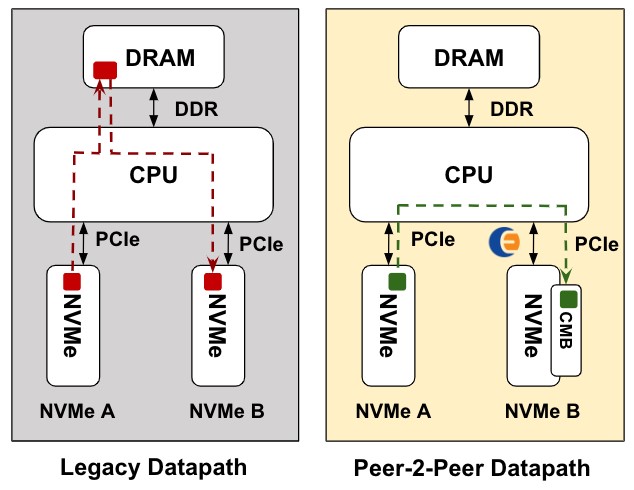

In Figure 1 we illustrate the difference between a legacy DMA copy between two PCIe devices and a P2PDMA copy.

Figure 1: A legacy DMA vs a P2PDMA. The P2PDMA avoids the CPU’s memory subsystem and can avoid the CPU entirely when PCIe switches are used.

p2pdma transfers bypass CPU memory and instead use PCIe memory exposed by one (or more) of the PCIe devices in the system (e.g., an NVMe Controller Memory Buffer (CMB) or another PCIe BAR). This requires a P2P-capable Root Complex or PCIe switch (note that AMD EPYC processors have very good p2pdma capability). Until now, this p2pdma path has not been enabled in operating systems (OSes).

p2pdma offers the following advantages over the legacy path:

- DMA traffic avoids the CPU’s memory subsystem which leaves that memory bandwidth available for applications.

- In systems with many PCIe devices the CPU memory subsystem can become the performance bottleneck. p2pdma avoids this problem.

- With good system design, p2pdma performance can scale linearly as more devices are added, because the CPU memory subsystem no longer becomes a shared bottleneck.

- In most systems the latency of the DMA is improved.

Why do NVM Express and p2pdma play well together?

NVM Express SSDs are PCIe End Points (EPs) with a very well-defined interface to the host CPU. NVMe EPs can support a relatively new optional feature called the Controller Memory Buffer (CMB), which can serve as the PCIe memory for p2pdma. In the upstream kernel, any NVMe SSD whose CMB advertises both Read Data Support (RDS) and Write Data Support (WDS) registers that memory with the p2pdma framework, where other PCIe EPs can use it for DMAs.

Our Eideticom NVMe NoLoad™ has a large, high-performance CMB, which we leverage through p2pdma to move data into and out of its acceleration namespaces.

Digging deeper into the p2pdma API

Rather than walk through the p2pdma API here, I refer you to the excellent article by Marta Rybczyńska on lwn.net that covers this topic.

p2pdma currently works well on Intel and AMD CPU platforms, and with additional patches we can get it running on ARM64 [1] and RISC-V [2]. We also have a simple p2p copy application that we use heavily for debug and performance testing [3], and fio supports using p2pdma memory for its I/O buffers via the iomem option [4]. A while back we also added support for emulated NVMe devices with CMBs to upstream QEMU [5].

[1] https://github.com/sbates130272/linux-p2pmem/commits/pci-p2p-v5-plus-ioremap

[2] https://github.com/sbates130272/linux-p2pmem/tree/riscv-p2p-sifive

[3] https://github.com/sbates130272/p2pmem-test

[4] https://github.com/axboe/fio/blob/master/HOWTO [see the iomem=mmapshared option]

[5] https://github.com/qemu/qemu/commit/b2b2b67a0057407e19cfa3fdd9002db21ced8b01

What’s Next?

- In some ways, getting the p2pdma framework upstream is just the beginning. Right now only one driver acts as a p2pmem provider (the NVMe driver) and only one driver acts as a consumer and orchestrator (the nvmet driver for RDMA-based NVMe over Fabrics).

- We have already been approached by several developers interested in adding their PCIe devices to the framework (e.g., GPGPU and FPGA companies). For example, p2pdma could become the basis for an upstream equivalent of GPUDirect, which has failed to be accepted upstream for several reasons over the years.

- Currently p2pdma can only be used in-kernel. An out-of-tree patch exists to expose p2pmem to userspace, but we need to settle on an upstreamable approach for userspace soon.

- Using p2pdma to enable NVMe device-to-device copies (i.e., Xcopy) has already been discussed at several Linux events and could be a prime candidate for another p2pdma orchestration driver.